Your AI cloud bill is going up.

Every month.

OpenAI hiked prices twice in 2025.

Anthropic added rate limits.

Your monthly API spend is now a line item the finance team asks about.

OpenMythos just dropped with a radically different architecture.

Fewer parameters.

More loops.

Lower compute costs.

Is this the end of your runaway AI bills?

Honestly? Maybe. Eventually.

But you have to understand the real maths first.

OpenMythos — The Honest Caveat First

OpenMythos is not the real Claude Mythos.

Kai Gomez built a theoretical reconstruction in PyTorch.

No Anthropic weights, code, or data.

The architecture idea is real.

The specific model is experimental.

Performance will not match what Anthropic would have shipped.

Keep that in mind as we talk costs.

The cost savings argument is about the architecture pattern.

Not about this specific repo replacing your Claude API.

Not yet anyway.

Why AI Costs Are Spiralling

Every business owner using AI has noticed.

Input tokens are cheap.

Output tokens are expensive.

Long context destroys your budget.

Fine-tuning is a luxury.

Hosting your own is a nightmare.

The pricing structure of closed APIs pushes you into a corner.

Use less and get worse results.

Use more and bleed cash.

The industry has been trapped in "make the model bigger" for five years.

Bigger means more parameters.

More parameters means more compute.

More compute means higher prices for you.

OpenMythos bets on breaking that loop.

Want to understand exactly how to manage AI spend in your business? Join the AI Profit Boardroom.

The OpenMythos Recurrent Depth Cost Argument

Here is the key idea.

Normal transformers add layers to get smarter.

Each layer adds parameters.

Each parameter adds compute.

Recurrent depth transformers loop through the same layers.

Same parameters, used multiple times.

Easy question? One loop.

Hard question? Ten loops.

Adaptive compute.
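Here is a toy sketch of that loop in Python. It assumes a single shared layer with two made-up weights and is not OpenMythos code. A simple convergence check stands in for whatever exit rule a real recurrent depth model would learn: the model stops looping once another pass barely changes its internal state.

```python
import math

# Toy recurrent depth model: ONE shared layer, reused on every loop.
# Both weights are made-up numbers for illustration, not OpenMythos parameters.
W_STATE = 0.5   # how much the previous hidden state feeds back in
W_INPUT = 1.0   # how strongly the original input is re-injected each loop

def shared_layer(h: float, x: float) -> float:
    """One pass through the shared layer. Same weights every time."""
    return math.tanh(W_STATE * h + W_INPUT * x)

def recurrent_forward(x: float, max_loops: int = 10, tol: float = 1e-3) -> tuple[float, int]:
    """Loop the shared layer until the hidden state settles or max_loops is hit.

    Returns (final hidden state, number of loops actually used).
    """
    h = 0.0
    for loop in range(1, max_loops + 1):
        new_h = shared_layer(h, x)
        if abs(new_h - h) < tol:   # another pass would barely change the answer: stop early
            return new_h, loop
        h = new_h
    return h, max_loops            # hard input: spent the full loop budget

for x in (0.0, 0.3):
    _, loops = recurrent_forward(x)
    print(f"input {x}: {loops} loop(s), still only 2 weights")
```

In this toy, the input 0.0 settles on the first pass while 0.3 needs several loops. The weight count never changes; only the number of passes does.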

Here is why that changes the cost maths.

Parameters cost memory.

Loops cost compute time, and only on the questions that need them.

You stop paying for huge static parameter counts on every single query.

You only pay for extra compute when a query actually needs it.

This is a big deal.

Where The Savings Actually Come From

Let me break it down concretely.

Big model: 500 billion parameters. Needs 8 H100s. Roughly $20-100 an hour to rent in the cloud.

Small recurrent depth model: 10 billion parameters. Fits on 1 GPU. $1-5 an hour.

Easy queries on the small model: 1 loop, blazing fast.

Hard queries on the small model: 10 loops, still cheaper than the big model.

Net result?

If the architecture delivers what it promises, inference costs drop 10-50x.

That is the savings pitch.
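To see where a multiple like that could come from, here is the back-of-envelope maths in Python. These are assumptions, not measurements: weights stored at 1 byte per parameter (FP8), roughly 2 FLOPs per parameter per token for each pass through the weights (attention and KV cache ignored), every parameter of the small model sitting inside the looped block, and a hypothetical traffic mix of 80% easy and 20% hard queries with similar token counts.

```python
# Back-of-envelope comparison using the example sizes above.
# Assumptions: 1 byte/param (FP8), ~2 FLOPs per param per token per pass,
# all small-model params are in the looped block. Not measured numbers.

def weight_memory_gb(params: float, bytes_per_param: float = 1.0) -> float:
    """Memory just to hold the weights. The loop count does not appear here."""
    return params * bytes_per_param / 1e9

def flops_per_token(params: float, loops: float = 1.0) -> float:
    """Compute per token: the shared weights are reused once per loop."""
    return 2 * params * loops

BIG = 500e9     # dense model from the example
SMALL = 10e9    # recurrent depth model from the example

# Hypothetical traffic mix: 80% easy queries (1 loop), 20% hard queries (10 loops).
avg_loops = 0.8 * 1 + 0.2 * 10

big_flops = flops_per_token(BIG)
small_flops = flops_per_token(SMALL, avg_loops)

print(f"weights: {weight_memory_gb(BIG):.0f} GB vs {weight_memory_gb(SMALL):.0f} GB")
print(f"average compute per token: {big_flops / small_flops:.1f}x less on the small model")
print(f"worst case (10 loops): {big_flops / flops_per_token(SMALL, 10):.1f}x less")
```

Under those assumptions the 500B model needs about 500 GB just for weights, which is why it takes a multi-GPU box like 8 H100s (80 GB each), while the 10B model's 10 GB fits on one card. Average compute per token comes out roughly 18x lower, and even a 10-loop query uses 5x less. Change the mix and the multiple moves with it.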

The Reality Check On Those Savings

Now the honest part.

Those savings assume the architecture matches closed model performance.

OpenMythos almost certainly does not.

The real Claude Mythos probably does.

The gap between theoretical architecture and trained production model is massive.

Training data quality.

Reward modeling.

Reinforcement learning.

Engineering optimisations.

Anthropic has all of those.

OpenMythos has a guess at the architecture.

So the 10-50x savings claim holds only for whoever eventually trains a recurrent depth model properly on good data.

Probably Anthropic themselves.

Possibly DeepSeek, Meta, or a well-funded open source team.

OpenMythos is a signal, not a product.

But here is the good news for business owners.

When this architecture lands in production APIs, prices will drop.

Claude, GPT, and Gemini will all adopt similar ideas.

Your API bill will come down naturally in 12-24 months.

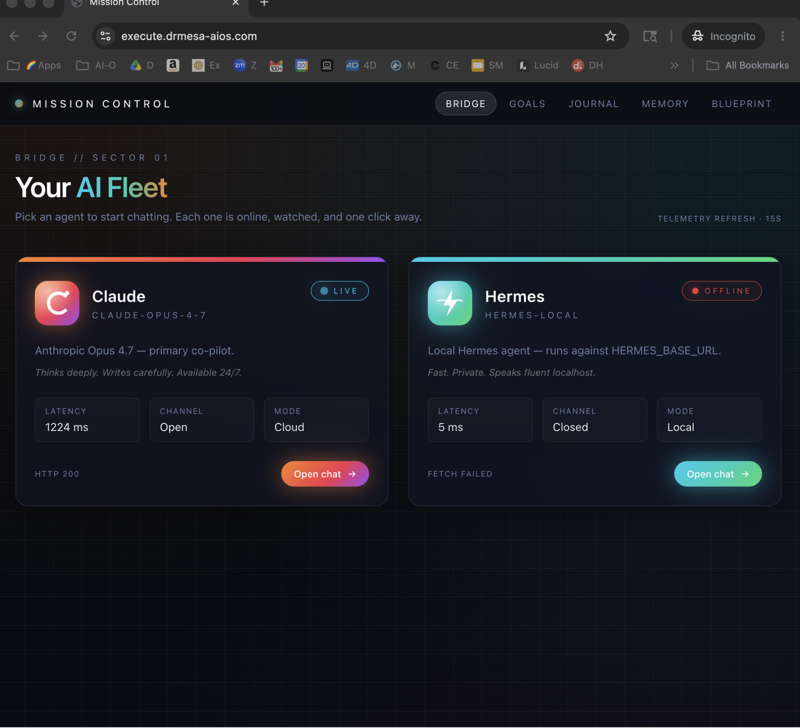

Read my Claude Opus 4.7 review and ChatGPT agent tutorial to see how commercial models are evolving.

The Self-Hosting Cost Maths

Let me give you the real numbers for self-hosting.

Hardware: $3,000-$10,000 for a GPU box capable of running mid-size models.

Or $500-$2,000 per month for cloud GPUs.

Setup: 40-80 hours of engineer time.

Maintenance: 10-20 hours per month.

Opportunity cost: you are not working on the business.

Now compare to API pricing.

$100-$2,000 per month for most small businesses.

No setup.

No maintenance.

Compare honestly.

For most businesses spending under $1,000 a month on AI APIs, self-hosting costs more.

The break-even point is usually around $2,000-$5,000 monthly API spend.

Once you cross that, self-hosting with an open source model makes financial sense.

If you are below that, stay on the API.

Scale the business first.

Optimise infrastructure later.
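A quick way to sanity-check that break-even for your own business is to put a dollar value on the engineer hours. This sketch uses the ranges quoted above; the $75/hour rate and spreading setup over 12 months are hypothetical placeholders, so swap in your own.

```python
# Rough monthly cost of self-hosting, built from the ranges quoted above.
# ENGINEER_RATE and AMORTISE_MONTHS are hypothetical placeholders: use your own.
ENGINEER_RATE = 75.0    # USD per engineer hour (assumption)
AMORTISE_MONTHS = 12    # spread the one-off setup time over a year (assumption)

def self_host_monthly(gpu_monthly: float, maint_hours: float, setup_hours: float) -> float:
    """Cloud GPU rent + ongoing maintenance + amortised setup, in USD per month."""
    maintenance = maint_hours * ENGINEER_RATE
    setup = setup_hours * ENGINEER_RATE / AMORTISE_MONTHS
    return gpu_monthly + maintenance + setup

def self_hosting_pays_off(api_monthly: float, self_host_cost: float) -> bool:
    """True only if your current API bill is higher than the self-hosting estimate."""
    return api_monthly > self_host_cost

low = self_host_monthly(gpu_monthly=500, maint_hours=10, setup_hours=40)
high = self_host_monthly(gpu_monthly=2000, maint_hours=20, setup_hours=80)
print(f"self-hosting: ${low:,.0f} to ${high:,.0f} per month")
print(self_hosting_pays_off(api_monthly=1000, self_host_cost=low))   # a $1k API bill
```

At those assumptions self-hosting runs roughly $1,500 to $4,000 a month before you count opportunity cost. It also assumes the open model does the job as well as the API model; if it needs more retries or more human review, the real number is higher.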

I break the full economics down inside the AI Profit Boardroom.

Three Smart Ways To Cut AI Costs Today

Stop waiting for OpenMythos-style savings.

Here is what works right now.

One. Use cheaper models for cheap tasks.

Claude Haiku and GPT-4o mini are roughly 10-20x cheaper per token than Opus and GPT-4o.

Most tasks do not need the flagship model.
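Routing can be as simple as a function that decides which model each task goes to. In this sketch the model names, the prices (picked to sit inside that 10-20x gap) and the keyword rule are all hypothetical; in practice you would tune the rule against your own tasks.

```python
# Minimal model router. Model names, prices and the keyword rule are hypothetical
# placeholders, not real price lists or a real difficulty classifier.
PRICE_PER_M_TOKENS = {        # blended USD per million tokens (assumed, ~15x gap)
    "cheap-model": 1.0,
    "flagship-model": 15.0,
}
HARD_KEYWORDS = ("legal", "architecture", "strategy", "debug")

def is_hard(task: str) -> bool:
    """Crude stand-in for a real difficulty check: long or high-stakes tasks."""
    return len(task) > 500 or any(word in task.lower() for word in HARD_KEYWORDS)

def route(task: str) -> str:
    """Send hard tasks to the flagship model and everything else to the cheap one."""
    return "flagship-model" if is_hard(task) else "cheap-model"

def estimate_cost(tasks: list[str], tokens_per_task: int = 2_000) -> float:
    """Total USD if every task uses roughly the same number of tokens (assumption)."""
    return sum(PRICE_PER_M_TOKENS[route(t)] * tokens_per_task / 1_000_000 for t in tasks)

tasks = ["Summarise this email", "Tag this support ticket", "Draft a legal reply"]
for t in tasks:
    print(f"{route(t):15} <- {t}")
print(f"estimated cost: ${estimate_cost(tasks):.3f}")
```

Only the legal reply goes to the flagship, so the three tasks cost about $0.034 instead of $0.09 if all three had used the flagship model.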

Two. Prompt cache aggressively.

Anthropic's prompt caching can cut input costs by up to 90% on workflows with repeated context.

Use it.
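Here is the arithmetic behind that figure. In Anthropic's API you mark the reusable prefix with a `cache_control` breakpoint; with the default 5-minute cache, writing the prefix costs 1.25x the normal input price and each later read costs 0.1x. The $3 per million base price, the token counts and the assumption that every call lands inside the cache lifetime are hypothetical.

```python
# Input-token cost of re-sending the same long prefix (system prompt, documents)
# with and without caching. Multipliers follow Anthropic's 5-minute cache pricing
# (write = 1.25x base input, read = 0.1x); the base price is an assumption.
BASE_INPUT_PER_M = 3.0   # USD per million input tokens (assumed)
WRITE_MULT = 1.25
READ_MULT = 0.10

def input_cost(prefix_tokens: int, fresh_tokens: int, calls: int, cached: bool) -> float:
    """USD for `calls` requests that share a prefix and each add `fresh_tokens`."""
    per_token = BASE_INPUT_PER_M / 1_000_000
    fresh = fresh_tokens * calls * per_token            # the part that changes every call
    if not cached:
        return prefix_tokens * calls * per_token + fresh
    # First call writes the cache; every later call (inside the cache lifetime) reads it.
    prefix = prefix_tokens * per_token * (WRITE_MULT + READ_MULT * (calls - 1))
    return prefix + fresh

without_cache = input_cost(50_000, 500, calls=100, cached=False)
with_cache = input_cost(50_000, 500, calls=100, cached=True)
print(f"${without_cache:.2f} -> ${with_cache:.2f} ({1 - with_cache / without_cache:.0%} saved)")
```

For 100 calls sharing a 50,000-token prefix, input cost drops from about $15.15 to about $1.82, roughly 88% less. Output tokens are billed as normal, so the saving on the whole bill depends on how input-heavy your workflow is.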

Three. Batch your requests.

OpenAI and Anthropic both offer 50% discounts on batch requests that can wait up to 24 hours.

If it does not need to be real time, batch it.

These three moves alone can cut your AI bill in half without changing your stack.
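To see how the three moves stack on a monthly bill, here is a small calculator. Each move is applied to whatever is left after the previous one, which assumes they do not overlap. Apart from the 50% batch discount quoted above, every share and saving in the example call is an assumption for illustration.

```python
# Stack the three moves on one monthly bill. Each move is applied to what is left
# after the previous one (assumes they do not overlap). All shares are assumptions.
def optimised_bill(
    bill: float,
    routed_share: float,        # fraction of spend moved to a cheaper model
    routed_saving: float,       # how much cheaper that model is (0.9 = 90% cheaper)
    cached_share: float,        # fraction of the remainder that is cacheable input
    cache_saving: float,        # saving on that cacheable part
    batch_share: float,         # fraction of the remainder that can wait for a batch
    batch_saving: float = 0.5,  # the 50% batch discount
) -> float:
    bill -= bill * routed_share * routed_saving
    bill -= bill * cached_share * cache_saving
    bill -= bill * batch_share * batch_saving
    return bill

after = optimised_bill(2000, routed_share=0.3, routed_saving=0.9,
                       cached_share=0.3, cache_saving=0.8, batch_share=0.3)
print(f"$2,000 -> ${after:,.0f} per month")
```

With 30% of spend routed to a 90%-cheaper model, 30% of the remainder cacheable at an 80% saving, and 30% of what is left batched, a $2,000 bill comes out around $943. Your own shares will differ, so measure them before trusting the total.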

Check my AI automation for small business breakdown for specific implementations.

When OpenMythos Architecture Will Matter To You

Here is the timeline I expect.

Q2 2026: OpenMythos and copycats make headlines. Hype builds.

Q3-Q4 2026: Anthropic and OpenAI adopt recurrent depth ideas internally.

Q1-Q2 2027: Commercial models using adaptive compute ship with lower pricing.

Q3 2027 onwards: Your API bills drop 30-50% naturally as efficiency improvements pass through.

That is my honest forecast.

The architecture bet is real.

The savings are real.

The timeline is 12-24 months away from hitting your bottom line.

In the meantime, do the three cost-cutting moves above.

Do not self-host unless you are over $2k-5k monthly spend.

Do not bet on OpenMythos specifically.

Do bet on the pattern of recurrent depth architecture winning.

Want to future-proof your AI cost structure? Come inside the AI Profit Boardroom and I will show you exactly how.

The Bigger Cost Picture

Let me zoom out.

AI costs per useful output have been dropping 50-80% per year since 2022.

GPT-3 pricing was brutal.

GPT-4 was cheaper on quality-adjusted terms.

GPT-4o was cheaper still.

Claude 3.5 Haiku costs pennies.

The trend is relentless.
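Compounding is what makes that trend matter. This sketch projects the cost of the same output forward at a constant annual decline; the 50% and 80% rates are the range quoted above, and assuming they hold is exactly that, an assumption.

```python
# Cost of the same AI output after n years at a constant annual price drop.
# Holding the drop constant is an assumption, not a forecast.
def cost_after(years: int, annual_drop: float, today: float = 1.0) -> float:
    """Compound the yearly decline: each year keeps (1 - annual_drop) of the cost."""
    return today * (1 - annual_drop) ** years

for drop in (0.5, 0.8):
    path = [round(cost_after(y, drop), 3) for y in range(4)]
    print(f"{drop:.0%} drop per year, cost of $1 today over years 0-3: {path}")
```

A task that costs $1.00 today would cost $0.25 two years out at 50% a year, or $0.04 at 80% a year.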

OpenMythos is one more data point.

Architecture innovation plus competition drives prices toward zero marginal cost.

If you are building a business on top of AI, this is incredible news.

Your unit economics improve every year without you doing anything.

But only if you do not lock yourself into expensive choices.

Stay flexible.

Stay on APIs.

Swap providers when the numbers make sense.

Build your moat in workflows and data.

Not in infrastructure.
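One way to keep swapping cheap is to treat the provider as data, not code: store a price and your own quality score per provider, then pick the cheapest one that clears your bar. The provider names, prices and scores below are hypothetical placeholders; the quality numbers would come from your own evals.

```python
from dataclasses import dataclass

# Provider choice lives in data, so switching is a config change, not a rewrite.
# Names, prices and quality scores are hypothetical placeholders.
@dataclass(frozen=True)
class Provider:
    name: str
    usd_per_m_tokens: float   # blended price (assumed)
    quality: float            # your own eval score, 0-1 (assumed)

def pick_provider(providers: list[Provider], min_quality: float) -> Provider:
    """Cheapest provider that clears your quality bar."""
    eligible = [p for p in providers if p.quality >= min_quality]
    if not eligible:
        raise ValueError("no provider meets the quality bar")
    return min(eligible, key=lambda p: p.usd_per_m_tokens)

PROVIDERS = [
    Provider("provider-a", usd_per_m_tokens=15.0, quality=0.92),
    Provider("provider-b", usd_per_m_tokens=4.0, quality=0.88),
    Provider("provider-c", usd_per_m_tokens=0.5, quality=0.70),
]

print(pick_provider(PROVIDERS, min_quality=0.85).name)
```

With a bar of 0.85 this picks provider-b; raise it to 0.9 and it falls back to provider-a. When a provider changes its prices, you update one number and re-run.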

FAQ

Does OpenMythos actually cut my AI costs today? No. It is an experimental reconstruction by Kai Gomez. Your production costs will not change from using it directly.

When will recurrent depth architecture save me money on APIs? My estimate is 12-24 months. Once Anthropic, OpenAI, and Google adopt these ideas, pricing will fall.

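Should I self-host OpenMythos to save money? No. It is an experimental reconstruction, not a production model. Self-hosting a proven open source model only makes sense if you are spending more than $2,000-$5,000 a month on API calls and have technical skills in-house.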

What are the best ways to cut AI costs right now? Use cheaper models for easy tasks, use prompt caching, and batch requests where possible.

Is OpenMythos the real Claude Mythos? No. Kai Gomez built a theoretical reconstruction. Anthropic did not release weights, code, or data.

How much can AI cost drop in the next 2 years? Historically, quality-adjusted prices have fallen 50-80% per year. If that trend continues through 2027, the same output would cost roughly 4-25% of today's price.