OpenClaw Kimi K2.6 is how I'd run a free AI agent today if I were starting completely from scratch.

No subscription.

No credit card drama.

Just Ollama, OpenClaw, and a model designed specifically for agent work.

I'll show you the exact setup I use, the real tests I ran this week, and the one pricing gotcha nobody warns you about.

Why The OpenClaw Kimi K2.6 Combo Works

Most "free AI agent" tutorials are either stale or built on a model that can't actually use tools properly.

Kimi K2.6 is the fix.

It's an open-source agentic model.

"Agentic" means it's trained to call tools, chain steps, and make decisions — not just blabber text.

Kimi's own blog called out OpenClaw and Hermes as the two shells it's built to pair with.

When the model's team hands you the rulebook, you follow it.

The OpenClaw Kimi K2.6 Setup In 4 Steps

Step 1: Run the One-Click Setup Command

Grab the one-click Ollama setup command.

Paste it into terminal.

Hit enter.

Installs Ollama fresh or updates you to the latest version if you've already got it.

Skip this update and Kimi K2.6 cloud won't show up in Models.

That's the most common reason people get stuck.
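If you want to confirm the update actually landed, here's a minimal Python sketch that reads the installed version from `ollama --version`. The minimum version Kimi K2.6 cloud needs isn't stated anywhere above, so whatever you pass as `minimum` is a placeholder to fill in from Ollama's release notes. The function names are mine, not part of Ollama.

```python
import re
import subprocess
from typing import Optional, Tuple

Version = Tuple[int, ...]


def parse_version(text: str) -> Optional[Version]:
    """Extract the first dotted version number (e.g. 0.12.3) from text."""
    match = re.search(r"(\d+(?:\.\d+)+)", text)
    if match is None:
        return None
    return tuple(int(part) for part in match.group(1).split("."))


def installed_ollama_version() -> Optional[Version]:
    """Run `ollama --version`; return None if Ollama isn't on the PATH."""
    try:
        result = subprocess.run(
            ["ollama", "--version"], capture_output=True, text=True, check=False
        )
    except FileNotFoundError:
        return None
    # Some builds print warnings on stderr, so scan both streams.
    return parse_version(result.stdout + result.stderr)


def is_new_enough(installed: Optional[Version], minimum: Version) -> bool:
    """Compare versions, padding with zeros so (0, 12) equals (0, 12, 0)."""
    if installed is None:
        return False
    width = max(len(installed), len(minimum))
    pad = lambda v: v + (0,) * (width - len(v))
    return pad(installed) >= pad(minimum)
```

Call `is_new_enough(installed_ollama_version(), minimum)` with the minimum you looked up. If it returns False, rerun the setup command before hunting for the model.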

Step 2: Find Kimi K2.6 in Models

Open Ollama.

Click Models.

Find Kimi K2.6.

Open-source.

Agentic.

Free within token limits.

Step 3: Pull or Run With OpenClaw

Two paths.

- Pull the model into Ollama, then point OpenClaw at it.

- Run it through OpenClaw directly.

I go with option two.

Fewer clicks.

Same destination.

Step 4: Check the Gateway in OpenClaw

Running the model starts the gateway.

Open OpenClaw.

Confirm the gateway's running.

That's it.

Works straight away.

Same gateway pattern as in my Ollama + Hermes walkthrough: after you've done it once, every future shell feels familiar.
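You can also double-check the Ollama side from a script. This is a minimal sketch, assuming Ollama is serving its local HTTP API on the default http://localhost:11434 and that the model appears in the `/api/tags` list once it has been pulled or run. The search term "kimi" is a guess at how the model is named, so use whatever name the Models page shows. It does not inspect OpenClaw's own gateway process.

```python
import json
import urllib.error
import urllib.request
from typing import List, Optional

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def fetch_tags(base_url: str = OLLAMA_URL, timeout: float = 3.0) -> Optional[dict]:
    """Return the parsed /api/tags response, or None if nothing answers."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return json.load(resp)
    except (urllib.error.URLError, OSError, ValueError):
        return None


def model_names(tags: Optional[dict]) -> List[str]:
    """Pull the model names out of an /api/tags response."""
    if not tags:
        return []
    return [entry.get("name", "") for entry in tags.get("models", [])]


def find_models(names: List[str], needle: str) -> List[str]:
    """Case-insensitive substring match, e.g. needle='kimi' (assumed name)."""
    needle = needle.lower()
    return [name for name in names if needle in name.lower()]
```

`fetch_tags()` returning None means Ollama itself isn't reachable. An empty result from `find_models(model_names(fetch_tags()), "kimi")` means the server is up but the model isn't listed under that name.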

Real Tests This Week

Didn't want to write theory.

So I used OpenClaw Kimi K2.6 on live work.

Test 1: "Research the web for the latest AI news today."

Three news releases.

Clean summary.

Back in seconds.

No robotic filler.

Test 2: "Check what happened today in AI automation."

Grabbed the web tool.

Pulled fresh info.

Reported back properly.

This is the agent behaviour you're supposed to get from an agentic model — and most of them still fumble it.

Kimi K2.6 doesn't.

The Pricing Trap

Here's the part I want you to actually remember.

Kimi K2.6 = cloud model.

Free within Ollama's token limits.

Push it hard and you'll hit the cap unless you're on Ollama's upgraded plan.

Gemma 4 = local model.

Unlimited usage when running locally.

Grab it from ollama.com > Models > Gemma 4.

The rule I live by:

- Use Kimi K2.6 for agent tasks — tool calls, research, multi-step jobs.

- Use Gemma 4 for volume — bulk writing, high-frequency tasks, anything you'd run 500 times a day.

That's how you get unlimited AI horsepower without paying a subscription.
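If you script your agents, that rule can be sketched in a few lines of Python. Everything named here is an assumption for illustration: the model tags, the task labels, and the token limit are placeholders, because Ollama's actual cap isn't given here and depends on your plan.

```python
from dataclasses import dataclass

CLOUD_MODEL = "kimi-k2.6:cloud"  # assumed tag; check the Models page
LOCAL_MODEL = "gemma4"           # assumed tag; check the Models page

# Task labels are hypothetical; they mirror the rule above.
AGENT_TASKS = {"tool_call", "research", "multi_step"}


@dataclass
class CloudBudget:
    """Tracks tokens spent against a self-imposed cloud limit."""
    limit: int
    used: int = 0

    def can_afford(self, tokens: int) -> bool:
        return self.used + tokens <= self.limit

    def spend(self, tokens: int) -> None:
        self.used += tokens


def pick_model(task_kind: str, estimated_tokens: int, budget: CloudBudget) -> str:
    """Agent tasks go to the cloud model while budget remains; the rest stay local."""
    if task_kind in AGENT_TASKS and budget.can_afford(estimated_tokens):
        budget.spend(estimated_tokens)
        return CLOUD_MODEL
    return LOCAL_MODEL
```

Falling back to the local model when the budget runs out keeps the agent running instead of stalling at the cap, at the cost of weaker tool use on those runs.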

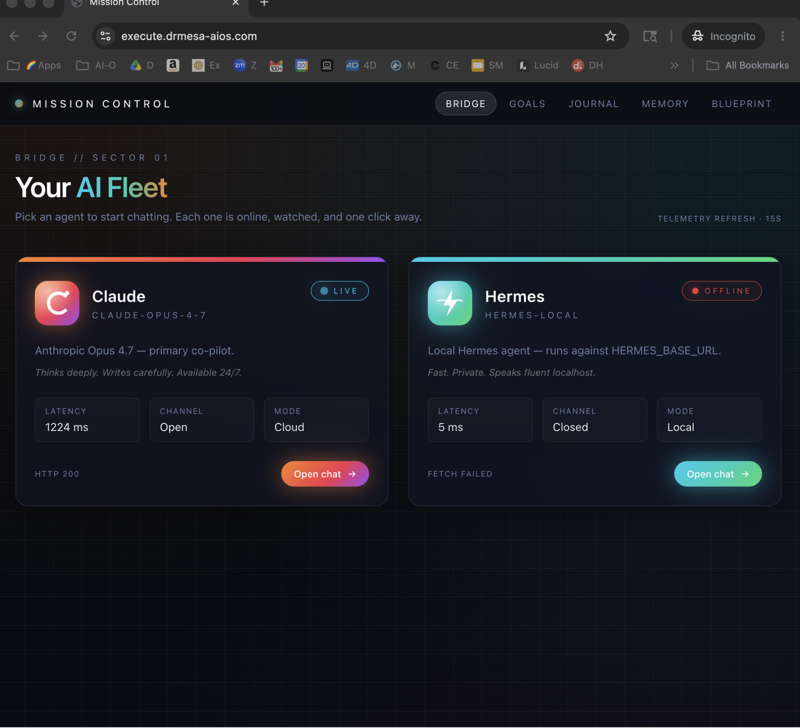

Hermes: The Other Option

OpenClaw isn't mandatory.

Kimi's team also called out Hermes as a blessed shell.

Hermes recently got Ollama support baked in.

To run Kimi with Hermes:

- Install Hermes with Ollama support.

- Type `launch Hermes` in terminal.

- On setup, select Kimi.

Done.

If you're deciding between them, the Hermes vs OpenClaw breakdown lays out which one fits which workflow.

Quick take: OpenClaw for tight agent runs, Hermes for when you want the full workspace feel.

Atomic Chat: No Terminal Needed

Honest observation.

Most people aren't technical enough to enjoy terminals.

Terminals make everything harder for probably 80% of readers.

That's where Atomic Chat comes in.

Same tools.

Same setup.

Proper UI.

Kimi K2.6 cloud sitting right there in the model list.

Dropdown to swap models on the fly.

Here's the step-by-step:

- Go to Atomic Chat.

- Click AI Models > API Keys.

- Find Kimi K2.6 cloud.

- Grab your API key from Ollama Settings > Keys.

- In Atomic Chat, select Ollama in the local model section.

- Pick Kimi K2.6 cloud.

- Hit Save.

- Go to Dashboard > Chat.

- Paste the Ollama API key into the local models section.

- Save again.

- Select Kimi K2.6 cloud Ollama from the dropdown.

- Start sending messages.

Zero terminal.

Full agent capability.

This is what I'd recommend to anyone who isn't building automations.

What Else Kimi K2.6 Plays With

Kimi K2.6 also works with:

- OpenCode

- Codex

- Claude Code

But setup is fastest with OpenClaw or Hermes — Kimi's own team said so, and they'd know.

If you already run an OpenClaw + Byterover pipeline, adding Kimi K2.6 is a model swap — no pipeline rebuild needed.

Common Mistakes to Avoid

I've seen these trip up beginners in the community again and again.

- Not updating Ollama. Old version, no Kimi cloud option.

- Confusing cloud with local. Kimi = cloud (limited). Gemma 4 = local (unlimited).

- Forgetting to check the gateway. No gateway, no agent.

- Missing the API key step in Atomic Chat. No Ollama key = no Kimi cloud access.

The OpenClaw 4.20 update notes are worth a read too, since some of the recent shell changes affect how requests to Kimi are routed.

My Honest Take

OpenClaw Kimi K2.6 is the free agent stack I'd run on day one if I were starting over.

Fast setup.

Agentic model.

Clean output.

Non-terminal option through Atomic Chat.

Only real trade-off: cloud token limits.

Fix that by pairing with Gemma 4 local for bulk work.

FAQ: OpenClaw Kimi K2.6

Is OpenClaw Kimi K2.6 beginner-friendly?

Yes — especially through Atomic Chat, which gives you a full GUI instead of a terminal.

You paste your Ollama API key in, pick Kimi K2.6 cloud, and you're chatting with an agent in under 5 minutes.

Why is Kimi K2.6 called "agentic"?

Because it's trained specifically to call tools and execute multi-step workflows rather than just generate chat replies.

That's the training difference that makes it useful inside OpenClaw.

How much does OpenClaw Kimi K2.6 cost?

Free within Ollama's cloud token limits.

Heavy daily usage needs Ollama's upgraded plan.

Or pair Kimi K2.6 with Gemma 4 local for unlimited work on bulk tasks.

Does OpenClaw Kimi K2.6 work on Windows and Mac?

Yes — both OpenClaw and Ollama run on Mac, Windows, and Linux.

The one-click setup command handles install on any of them.

Can I run Kimi K2.6 offline?

No — it's a cloud model.

For offline or unlimited use, run Gemma 4 locally through Ollama.

What's the fastest way to test OpenClaw Kimi K2.6 is working?

Send it this prompt: "Research the web for the latest AI news today."

If it returns fresh news releases cleanly, the gateway, model, and web tool are all wired up correctly.
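If the prompt fails inside OpenClaw and you want to narrow down which leg is broken, you can test the model on its own. This is a rough sketch, assuming Ollama's local `/api/chat` endpoint on the default port and a guessed model tag. A direct call like this bypasses OpenClaw, so there is no web tool involved. It only tells you whether the model answers at all, and cloud models may also need you to be signed in to Ollama.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address
SMOKE_PROMPT = "Research the web for the latest AI news today."


def build_chat_request(
    model: str, prompt: str, base_url: str = OLLAMA_URL
) -> urllib.request.Request:
    """Build a non-streaming POST to Ollama's /api/chat endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream
    }
    return urllib.request.Request(
        f"{base_url}/api/chat",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response: dict) -> str:
    """Return the assistant's text from a /api/chat response, or ''."""
    return response.get("message", {}).get("content", "")


def send(request: urllib.request.Request, timeout: float = 120.0) -> dict:
    """Send the request and parse the JSON reply (raises on network errors)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)
```

Running `extract_reply(send(build_chat_request("kimi-k2.6:cloud", SMOKE_PROMPT)))` with your real model tag should return some text. If it does and OpenClaw still fails, the problem is more likely the gateway or the web tool than the model.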

Related Reading

- Hermes vs OpenClaw — pick your shell.

- OpenClaw 4.20 update — latest shell changes.

- OpenClaw + Byterover — slot Kimi into a bigger pipeline.

Start your first agent today — OpenClaw Kimi K2.6.