DeepSeek V4 Ollama is the closest thing to free money I've found in AI this year — a frontier model, no GPU required, no API bill, running in your terminal in four minutes.

I'll show you how I cut my AI subscriptions to nearly zero with this exact setup.

Six months ago I was spending around £200/month on AI tools.

ChatGPT Plus. Claude Pro. A couple of API keys for custom tooling. A premium browser-automation service.

Today that bill is closer to £20.

The single biggest swap that made it possible? DeepSeek V4 Ollama.

Let me show you exactly what happened.

The Old Stack Vs The New DeepSeek V4 Ollama Stack

Old monthly spend:

- ChatGPT Plus: £20

- Claude Pro: £20

- Premium API keys for various models: £100+

- Browser automation SaaS: £40

Total: ~£180-£220/month

New monthly spend:

- DeepSeek V4 Ollama: £0 (cloud model, free tier)

- Claude Code with Ollama backend: £0

- OpenClaw: £0 (open source)

- Hermes: £0 (open source)

- Codex with Ollama backend: £0

- Open Code: £0

- One premium API for client deliverables: ~£20

Total: ~£20/month

That's £180/month back in my pocket. £2,160 a year.
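If you want to run the same sums on your own bill, here's a rough sketch of the arithmetic. The 200 figure is my assumption (the midpoint of the ~£180-£220 range above); swap in your own numbers.

```shell
# Figures taken from the lists above, in GBP per month.
old_monthly=200   # assumed midpoint of the ~180-220 range
new_monthly=20    # the one remaining premium API

monthly_saving=$((old_monthly - new_monthly))
annual_saving=$((monthly_saving * 12))
percent_saved=$((monthly_saving * 100 / old_monthly))

echo "Monthly saving: GBP $monthly_saving"
echo "Annual saving:  GBP $annual_saving"
echo "Share of old bill removed: ${percent_saved}%"
```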

And honestly, the work output is the same or better.

How DeepSeek V4 Ollama Pulls This Off

Here's the trick — DeepSeek V4 Flash isn't a normal Ollama model.

Normal Ollama = download huge weights, run on your hardware, need a GPU for anything decent.

DeepSeek V4 Flash = cloud model through Ollama. The model lives on Ollama's servers. Your machine just talks to it via the standard ollama run command.

Net effect:

- Zero hardware requirements

- Zero download time

- Zero local resources used

- Free tier with usage limits that genuinely cover most workflows

This is the bit that flips the economics. Before, "free local AI" meant "buy a £3,000 machine first". Now it means "install Ollama, type one command, done".

The Four-Minute DeepSeek V4 Ollama Setup

Get Ollama:

curl -fsSL https://ollama.com/install.sh | sh

Run DeepSeek V4 Flash:

ollama run deepseek-v4-flash

That's it. You're chatting with DeepSeek V4.
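If you'd rather script it than chat, here's a minimal sketch that sends one prompt to Ollama's local HTTP API. It assumes Ollama is listening on its default address (http://localhost:11434) and that the model name from the command above is available to you. The helper names build_payload and ask are mine, purely for illustration.

```shell
MODEL="deepseek-v4-flash"            # model name as used in this article
OLLAMA_URL="http://localhost:11434"  # Ollama's default local address

# Build the JSON body for /api/generate.
# Only backslashes and double quotes are escaped, so keep prompts to one line.
build_payload() {
  escaped_prompt=$(printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g')
  printf '{"model":"%s","prompt":"%s","stream":false}' "$MODEL" "$escaped_prompt"
}

# Send one prompt and print the raw JSON reply.
ask() {
  curl -s "$OLLAMA_URL/api/generate" -d "$(build_payload "$1")"
}

# Usage (needs a running Ollama):
#   ask "Summarise this in one line: own the harness, rent the model"
```

Setting "stream" to false returns one JSON object instead of a stream of chunks, which is easier to handle in a quick script.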

Quirk to expect: its visible reasoning can show up in Chinese even though the reply comes back in English. It looks weird the first time, but I haven't seen it affect the quality of the answers.

If you want a deeper walkthrough on the model itself, my DeepSeek V4 tutorial covers the background.

🔥 Want to see the full free-stack setup with all the configs? Inside the AI Profit Boardroom I've documented every harness config pointing at DeepSeek V4 Ollama — Claude Code, OpenClaw, Hermes, Codex. Step-by-step videos, weekly coaching, 3,000+ members building the same stack. → Get access here

Wiring Up the Free Agent Stack

The model alone is just a chatbot. The stack is where the real money-saving happens.

Open a few new terminal tabs (Cmd+T on macOS). Now you can run all of these in parallel:

Claude Code → DeepSeek V4 Ollama

Claude Code's agent loop pointed at the free model. I do all my refactoring sessions here now. Used to burn £15-20/day in API costs. Now zero.
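As a rough sketch of what "pointed at the free model" can look like: Claude Code reads the ANTHROPIC_BASE_URL and ANTHROPIC_AUTH_TOKEN environment variables. The sketch assumes your Ollama version accepts Anthropic-style requests on its default local address, which you should check before relying on it. The token value is a placeholder.

```shell
# Assumption: the local Ollama server accepts Anthropic-style requests here.
export ANTHROPIC_BASE_URL="http://localhost:11434"
# Placeholder value; assumed not to be validated by a local server.
export ANTHROPIC_AUTH_TOKEN="ollama"

MODEL="deepseek-v4-flash"   # model name as used in this article

# Print the launch command rather than running it, so this is safe to paste.
echo "claude --model $MODEL"
```

Run the printed command in the same shell session so the two exported variables are picked up.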

OpenClaw → DeepSeek V4 Ollama

Browser automation that used to cost £40/month on a SaaS. I told it "go speak to ChatGPT" and it opened ChatGPT in my browser instantly. Live browser control, model-driven, free.

If you want to see this combo in action with a different model first, the OpenClaw with Kimi K2.6 breakdown is a good warm-up.

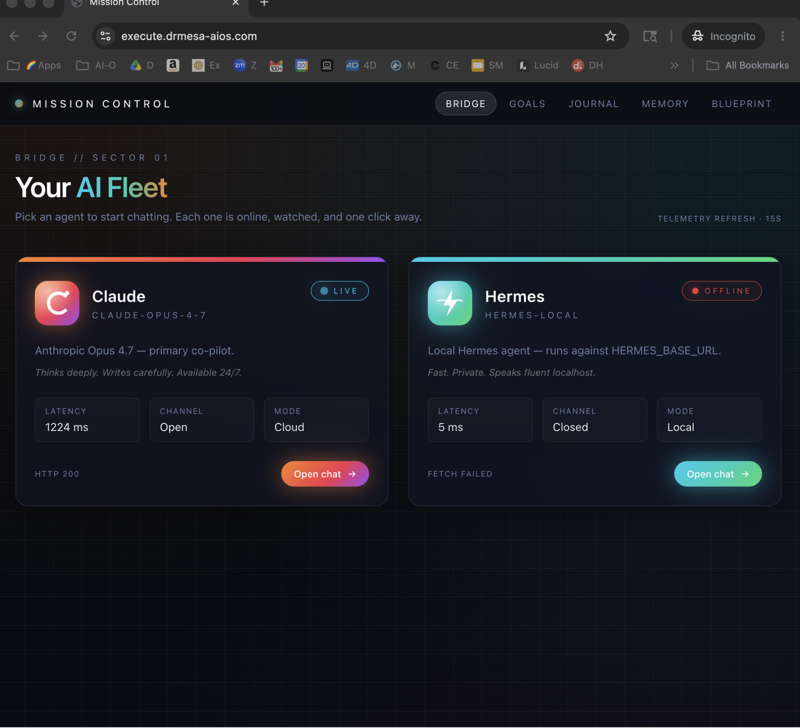

Hermes → DeepSeek V4 Ollama

Scheduled agents that just run forever. My daily news research agent runs at 9am every day with zero ongoing cost. Replaced a £30/month newsletter intelligence tool I was paying for.
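For a feel of what a 9am daily schedule looks like, here's a sketch using plain cron. This is not Hermes's own scheduling mechanism, just the generic equivalent. The script path is hypothetical; point it at whatever command starts your agent.

```shell
# Hypothetical wrapper script that starts the research agent.
AGENT_CMD="$HOME/agents/daily-news.sh"
LOG_FILE="$HOME/agents/daily-news.log"

# Cron fields: minute hour day-of-month month day-of-week.
# "0 9 * * *" means 09:00 every day.
CRON_LINE="0 9 * * * $AGENT_CMD >> $LOG_FILE 2>&1"

# Print the entry; add it to your schedule by pasting it into `crontab -e`.
echo "$CRON_LINE"
```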

Codex → DeepSeek V4 Ollama

OpenAI's Codex CLI, but pointed at the free DeepSeek V4 brain. It built me a working ping pong game in five minutes for free. The same task on the raw OpenAI API would have cost a few quid in tokens.

Open Code → DeepSeek V4 Ollama

Built a full blog page locally. The kind of thing I'd have used a paid tool for last year.

The Hidden Cost That Disappears

Here's the bit nobody talks about.

It's not just the £180/month subscription savings.

It's the mental tax of every API call.

When you're paying per token, you start rationing prompts. "Is this question worth £0.20?" "Should I really run this through Claude?"

When the model is free, you stop counting.

You experiment more. You iterate faster. You build things you wouldn't have bothered building before.

That mental shift is worth more than the £180.

What You Still Pay For

To be honest, there are things I still pay for.

- One premium API for high-stakes client deliverables where I want absolute top-tier output

- Hosting for the agents themselves (basically nothing)

- Domain names and infrastructure for projects I ship

That's about £20/month total.

Everything else? DeepSeek V4 Ollama.

The Money Question Nobody Asks

"What if Ollama starts charging?"

Possible. Free tiers don't last forever.

But here's the thing — even if they do, you're still ahead.

Most of the harnesses (OpenClaw, Hermes, Codex) are open source, and Claude Code, while proprietary, can be pointed at a different backend too. None of them is tied to one model.

The day Ollama monetises, you swap to whichever free or cheap model is hot at the time. The harnesses don't care.

That's the durable insight — own the harness, rent the model. Models cycle every six months. Harnesses last for years.
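One way to keep that swap cheap is to name the model in exactly one place. A minimal sketch, assuming your wrappers are shell scripts; AI_MODEL and chat_cmd are names I made up for illustration.

```shell
# One variable decides which model every wrapper talks to.
# Falls back to the model used in this article if AI_MODEL is not set.
AI_MODEL="${AI_MODEL:-deepseek-v4-flash}"

# Build the chat command for whichever model is currently selected.
chat_cmd() {
  printf 'ollama run %s' "$AI_MODEL"
}

echo "Current command: $(chat_cmd)"
```

When the free model changes, you edit one line (or export AI_MODEL before launching) and everything built on top follows.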

My Recommendation If You're Spending £100+/month on AI

Stop. Today.

Spend the next thirty minutes installing Ollama and running DeepSeek V4 Flash.

Wire it into Claude Code first — easiest wins are there. The walkthrough on Claude Code free setup covers the basics.

Then add OpenClaw or Hermes depending on what kind of work you do most.

Within a week you'll have replaced 60-80% of your paid AI usage.

Within a month you'll wonder why you ever paid.

Want help cutting your AI bill to near-zero? Inside the AI Profit Boardroom I've recorded the exact swap-out path — what to cancel, what to install, what to wire in. Plus weekly calls where I'll audit YOUR stack live. → Join the Boardroom

Related reading

- DeepSeek V4 Tutorial — the model deep-dive

- Claude Code Free — the harness setup

- OpenClaw with Kimi K2.6 — same harness, different model

FAQ

Does DeepSeek V4 Ollama really cost nothing?

Within the free tier limits, yes. The free tier is generous — for solo operators it's enough for daily heavy use. If you push thousands of requests per day, you may hit limits and need a paid tier.

Will the DeepSeek V4 Ollama free tier last forever?

Honestly, no idea. Free tiers shift over time. While it's free, I'm using it heavily. If it monetises, I'll swap to whatever the next free model is — the harnesses don't care which model they call.

Can DeepSeek V4 Ollama really replace ChatGPT Plus?

For me, yes. The model is comparable for most tasks, and once it's wired into Claude Code or OpenClaw, you've got more agentic capability than the ChatGPT web app gives you anyway.

What about for client work — is DeepSeek V4 Ollama production-ready?

Depends on the client. For internal work and most agency deliverables, absolutely. For high-stakes regulated work, I still use a premium model with a clear audit trail.

Do I need any specific hardware for DeepSeek V4 Ollama?

No. That's the whole point of the cloud model. A five-year-old laptop will run it because the actual inference happens on Ollama's servers, not yours.

How much can I really save with DeepSeek V4 Ollama?

I cut about £180/month off my AI bills, from roughly £200 down to £20. Your number depends on what you're spending now. If you're at £100+/month, expect 70-90% savings within a month of switching.

DeepSeek V4 Ollama is the single biggest cost-cutting move I've made in my AI stack this year — install it tonight and watch your monthly bills disappear.